موارد زیر، معنای عملیات تعریف شده در رابط XlaBuilder را شرح میدهد. معمولاً این عملیاتها به صورت یک به یک به عملیات تعریف شده در رابط RPC در xla_data.proto نگاشت میشوند.

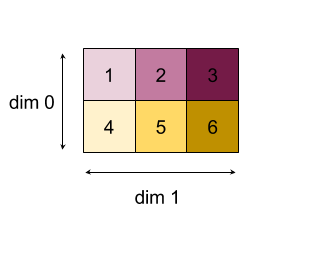

نکتهای در مورد نامگذاری: نوع داده تعمیمیافته XLA با یک آرایه N بعدی سروکار دارد که عناصری از نوع یکنواخت (مانند اعشار ۳۲ بیتی) را در خود نگه میدارد. در سراسر مستندات، از آرایه برای نشان دادن یک آرایه با ابعاد دلخواه استفاده میشود. برای راحتی، موارد خاص نامهای مشخصتر و آشناتری دارند؛ به عنوان مثال، یک بردار یک آرایه ۱ بعدی و یک ماتریس یک آرایه ۲ بعدی است.

برای کسب اطلاعات بیشتر در مورد ساختار یک عملیات، به بخش شکلها و طرحبندی و بخش طرحبندی کاشیکاری شده مراجعه کنید.

شکم

همچنین به XlaBuilder::Abs مراجعه کنید.

در هر عنصر، abs x -> |x| .

Abs(operand)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | عملوند تابع |

برای اطلاعات StableHLO به StableHLO - abs مراجعه کنید.

اضافه کردن

همچنین XlaBuilder::Add ببینید.

جمع lhs و rhs را به صورت عنصر به عنصر انجام میدهد.

Add(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

| rhs | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

شکل آرگومانها باید یا مشابه باشند یا سازگار. برای آشنایی با مفهوم سازگار بودن شکلها، به مستندات پخش مراجعه کنید. نتیجه یک عملیات، شکلی دارد که حاصل پخش دو آرایه ورودی است. در این نوع، عملیات بین آرایههایی با رتبههای مختلف پشتیبانی نمیشود ، مگر اینکه یکی از عملوندها اسکالر باشد.

یک نوع جایگزین با پشتیبانی از پخش چندبعدی برای Add وجود دارد:

Add(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

| rhs | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

| بُعد_پخش | آرایه برش | هر بُعد از شکل عملوند با کدام بُعد در شکل هدف مطابقت دارد؟ |

این نوع عملیات باید برای عملیات حسابی بین آرایههایی با رتبههای مختلف (مانند جمع کردن یک ماتریس به یک بردار) استفاده شود.

عملوند اضافی broadcast_dimensions برشی از اعداد صحیح است که ابعاد مورد استفاده برای پخش عملوندها را مشخص میکند. معانی آن به تفصیل در صفحه پخش توضیح داده شده است.

برای اطلاعات StableHLO به StableHLO - add مراجعه کنید.

افزودن وابستگی

همچنین HloInstruction::AddDependency مراجعه کنید.

AddDependency ممکن است در دامپهای HLO ظاهر شود، اما قرار نیست توسط کاربران نهایی به صورت دستی ساخته شوند.

بالاخره

همچنین به XlaBuilder::AfterAll مراجعه کنید.

AfterAll تعداد متغیری از توکنها را میگیرد و یک توکن واحد تولید میکند. توکنها انواع اولیهای هستند که میتوانند بین عملیات جانبی برای اعمال ترتیب، رشتهبندی شوند. AfterAll میتواند به عنوان اتصال توکنها برای ترتیب یک عملیات پس از مجموعهای از عملیاتها استفاده شود.

AfterAll(tokens)

| استدلالها | نوع | معناشناسی |

|---|---|---|

tokens | بردار XlaOp | تعداد متغیر توکنها |

برای اطلاعات StableHLO به StableHLO - after_all مراجعه کنید.

آلگَتِر

همچنین به XlaBuilder::AllGather مراجعه کنید.

عملیات الحاق را در بین کپیها انجام میدهد.

AllGather(operand, all_gather_dimension, shard_count, replica_groups, channel_id, layout, use_global_device_ids)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | آرایهای برای الحاق در سراسر کپیها |

all_gather_dimension | int64 | بُعد الحاق |

shard_count | int64 | اندازه هر گروه کپی |

replica_groups | بردار بردارهای int64 | گروههایی که الحاق بین آنها انجام میشود |

channel_id | ChannelHandle اختیاری | شناسه کانال اختیاری برای ارتباط بین ماژولها |

layout | Layout اختیاری | یک الگوی طرحبندی ایجاد میکند که طرحبندی منطبق در آرگومان را ثبت میکند. |

use_global_device_ids | bool اختیاری | اگر شناسههای موجود در پیکربندی ReplicaGroup نشاندهندهی یک شناسهی سراسری باشند، مقدار true را برمیگرداند. |

-

replica_groupsفهرستی از گروههای کپی است که الحاق بین آنها انجام میشود (شناسه کپی برای کپی فعلی را میتوان با استفاده ازReplicaIdبازیابی کرد). ترتیب کپیها در هر گروه، ترتیب قرارگیری ورودیهای آنها در نتیجه را تعیین میکند.replica_groupsیا باید خالی باشد (که در این صورت همه کپیها به یک گروه واحد تعلق دارند که از0تاN - 1مرتب شدهاند)، یا باید شامل تعداد عناصر یکسانی با تعداد کپیها باشد. به عنوان مثال،replica_groups = {0, 2}, {1, 3}الحاق بین کپیهای0و2و1و3را انجام میدهد. -

shard_countاندازه هر گروه replica است. ما در مواردی کهreplica_groupsخالی است به این نیاز داریم. -

channel_idبرای ارتباط بین ماژولها استفاده میشود: فقط عملیاتهایall-gatherباchannel_idیکسان میتوانند با یکدیگر ارتباط برقرار کنند. - اگر شناسههای موجود در پیکربندی ReplicaGroup به جای شناسه replica، نشاندهنده یک شناسه سراسری (replica_id * partition_count + partition_id) باشند، مقدار true را برمیگرداند. این

use_global_device_idsگروهبندی انعطافپذیرتر دستگاهها را در صورتی که این all-reduce هم به صورت cross-partition و هم به صورت cross-replica باشد، امکانپذیر میسازد.

شکل خروجی، شکل ورودی است که all_gather_dimension آن shard_count چند برابر بزرگتر کرده است. برای مثال، اگر دو کپی وجود داشته باشد و عملوند به ترتیب مقدار [1.0, 2.5] و [3.0, 5.25] را در دو کپی داشته باشد، آنگاه مقدار خروجی از این عملیات که all_gather_dim برابر با 0 است، در هر دو کپی [1.0, 2.5, 3.0,5.25] خواهد بود.

رابط برنامهنویسی کاربردی (API) مربوط به AllGather به صورت داخلی به دو دستورالعمل HLO ( AllGatherStart و AllGatherDone ) تجزیه شده است.

همچنین HloInstruction::CreateAllGatherStart مراجعه کنید.

AllGatherStart و AllGatherDone به عنوان مقادیر اولیه در HLO عمل میکنند. این عملیات ممکن است در فایلهای HLO ظاهر شوند، اما قرار نیست توسط کاربران نهایی به صورت دستی ساخته شوند.

برای اطلاعات StableHLO به StableHLO - all_gather مراجعه کنید.

همهکاهش

همچنین به XlaBuilder::AllReduce مراجعه کنید.

یک محاسبه سفارشی را در سراسر کپیها انجام میدهد.

AllReduce(operand, computation, replica_groups, channel_id, shape_with_layout, use_global_device_ids)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | آرایه یا یک تاپل غیر تهی از آرایهها برای کاهش در کپیها |

computation | XlaComputation | محاسبه کاهش |

replica_groups | بردار ReplicaGroup | گروههایی که کاهشها بین آنها انجام میشود |

channel_id | ChannelHandle اختیاری | شناسه کانال اختیاری برای ارتباط بین ماژولها |

shape_with_layout | Shape اختیاری | طرحبندی دادههای منتقلشده را تعریف میکند |

use_global_device_ids | bool اختیاری | اگر شناسههای موجود در پیکربندی ReplicaGroup نشاندهندهی یک شناسهی سراسری باشند، مقدار true را برمیگرداند. |

- وقتی

operandیک چندتایی از آرایهها باشد، تمام کاهش روی هر عنصر چندتایی انجام میشود. -

replica_groupsفهرستی از گروههای کپی است که کاهش بین آنها انجام میشود (شناسه کپی برای کپی فعلی را میتوان با استفاده ازReplicaIdبازیابی کرد).replica_groupsیا باید خالی باشد (که در این صورت همه کپیها به یک گروه واحد تعلق دارند)، یا باید شامل تعداد عناصر یکسانی با تعداد کپیها باشد. به عنوان مثال،replica_groups = {0, 2}, {1, 3}کاهش را بین کپیهای0و2و1و3انجام میدهد. -

channel_idبرای ارتباط بین ماژولها استفاده میشود: فقط عملیاتهایall-reduceباchannel_idیکسان میتوانند با یکدیگر ارتباط برقرار کنند. -

shape_with_layout: طرحبندی AllReduce را به طرحبندی داده شده مجبور میکند. این برای تضمین طرحبندی یکسان برای گروهی از عملیات AllReduce که به طور جداگانه کامپایل شدهاند، استفاده میشود. - اگر شناسههای موجود در پیکربندی ReplicaGroup به جای شناسه replica، نشاندهنده یک شناسه سراسری (replica_id * partition_count + partition_id) باشند، مقدار true را برمیگرداند. این

use_global_device_idsگروهبندی انعطافپذیرتر دستگاهها را در صورتی که این all-reduce هم به صورت cross-partition و هم به صورت cross-replica باشد، امکانپذیر میسازد.

شکل خروجی همان شکل ورودی است. برای مثال، اگر دو کپی وجود داشته باشد و عملوند به ترتیب مقدار [1.0, 2.5] و [3.0, 5.25] را در دو کپی داشته باشد، مقدار خروجی حاصل از این محاسبه عملیات و جمع در هر دو کپی [4.0, 7.75] خواهد بود. اگر ورودی یک چندتایی باشد، خروجی نیز یک چندتایی است.

محاسبه نتیجه AllReduce نیاز به داشتن یک ورودی از هر کپی دارد، بنابراین اگر یک کپی، گره AllReduce را بیشتر از دیگری اجرا کند، کپی قبلی برای همیشه منتظر خواهد ماند. از آنجایی که کپیها همه یک برنامه را اجرا میکنند، راههای زیادی برای وقوع این اتفاق وجود ندارد، اما زمانی که شرط یک حلقه while به دادههای infeed بستگی دارد و دادههای infeed باعث میشوند که حلقه while تعداد دفعات بیشتری روی یک کپی نسبت به دیگری تکرار شود، این امر امکانپذیر است.

رابط برنامهنویسی کاربردی AllReduce به صورت داخلی به دو دستورالعمل HLO ( AllReduceStart و AllReduceDone ) تجزیه شده است.

همچنین HloInstruction::CreateAllReduceStart مراجعه کنید.

AllReduceStart و AllReduceDone به عنوان مقادیر اولیه در HLO عمل میکنند. این عملیات ممکن است در فایلهای HLO ظاهر شوند، اما قرار نیست توسط کاربران نهایی به صورت دستی ساخته شوند.

CrossReplicaSum

همچنین به XlaBuilder::CrossReplicaSum مراجعه کنید.

AllReduce با محاسبه جمع انجام میدهد.

CrossReplicaSum(operand, replica_groups)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | ایکس لا اوپ | آرایه یا یک تاپل غیر تهی از آرایهها برای کاهش در کپیها |

replica_groups | بردار بردارهای int64 | گروههایی که کاهشها بین آنها انجام میشود |

مجموع مقدار عملوند را در هر زیرگروه از کپیها برمیگرداند. همه کپیها یک ورودی به مجموع میدهند و همه کپیها مجموع حاصل را برای هر زیرگروه دریافت میکنند.

همه به همه

همچنین به XlaBuilder::AllToAll مراجعه کنید.

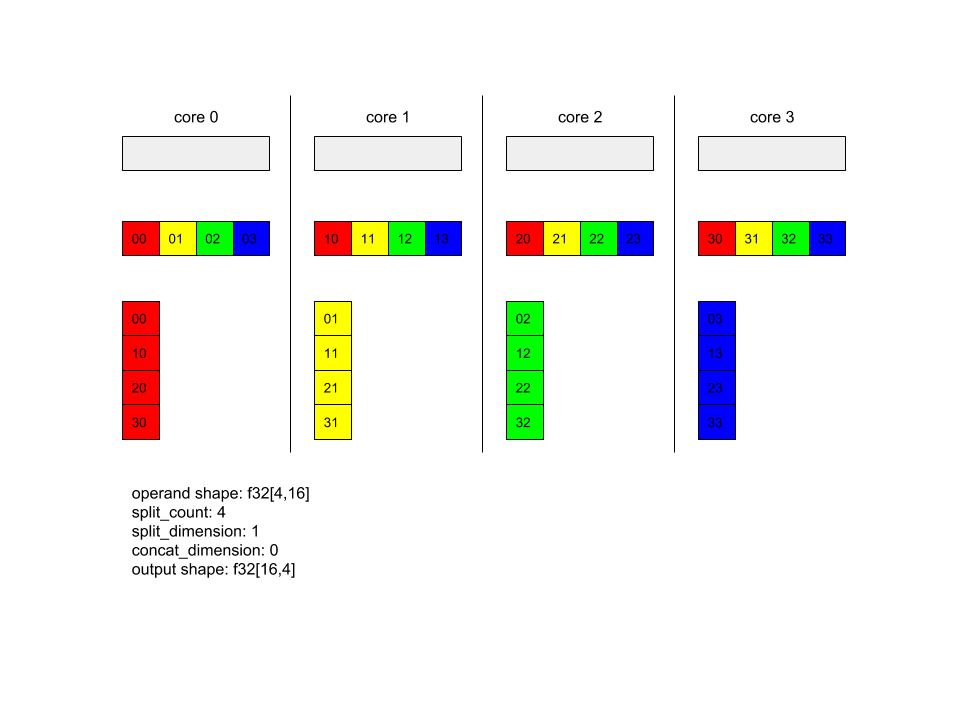

AllToAll یک عملیات جمعی است که دادهها را از همه هستهها به همه هستهها ارسال میکند. این عملیات دو مرحله دارد:

- مرحله پراکندگی. در هر هسته، عملوند به تعداد بلوکهای

split_countدر امتدادsplit_dimensionsتقسیم میشود و بلوکها به تمام هستهها پراکنده میشوند، مثلاً بلوک iام به هسته iام ارسال میشود. - مرحله جمعآوری. هر هسته بلوکهای دریافتی را در امتداد

concat_dimensionبه هم متصل میکند.

هستههای شرکتکننده را میتوان با موارد زیر پیکربندی کرد:

-

replica_groups: هر ReplicaGroup شامل لیستی از شناسههای کپی است که در محاسبه شرکت میکنند (شناسه کپی برای کپی فعلی را میتوان با استفاده ازReplicaIdبازیابی کرد). AllToAll در زیرگروهها به ترتیب مشخص شده اعمال خواهد شد. به عنوان مثال،replica_groups = { {1,2,3}, {4,5,0} }به این معنی است که یک AllToAll در کپیهای{1, 2, 3}و در مرحله جمعآوری اعمال میشود و بلوکهای دریافتی به همان ترتیب 1، 2، 3 به هم متصل میشوند. سپس، AllToAll دیگری در کپیهای 4، 5، 0 اعمال میشود و ترتیب الحاق نیز 4، 5، 0 است. اگرreplica_groupsخالی باشد، همه کپیها به یک گروه تعلق دارند، به ترتیب الحاق ظاهر شدنشان.

پیشنیازها:

- اندازه بُعد عملوند روی

split_dimensionبرsplit_countبخشپذیر است. - شکل عملوند چندتایی نیست.

AllToAll(operand, split_dimension, concat_dimension, split_count, replica_groups, layout, channel_id)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | آرایه ورودی n بعدی |

split_dimension | int64 | مقداری در بازه [0,n) که نام بُعدی است که عملوند در امتداد آن تقسیم میشود |

concat_dimension | int64 | مقداری در بازه [0,n) که بُعدی را که بلوکهای تقسیمشده در امتداد آن به هم متصل میشوند، نامگذاری میکند. |

split_count | int64 | تعداد هستههایی که در این عملیات شرکت میکنند. اگر replica_groups خالی باشد، این باید تعداد کپیها باشد؛ در غیر این صورت، این باید برابر با تعداد کپیها در هر گروه باشد. |

replica_groups | بردار ReplicaGroup | هر گروه شامل لیستی از شناسههای کپی است. |

layout | Layout اختیاری | طرح حافظه مشخص شده توسط کاربر |

channel_id | ChannelHandle اختیاری | شناسه منحصر به فرد برای هر جفت ارسال/دریافت |

برای اطلاعات بیشتر در مورد شکلها و طرحبندیها، به xla::shapes مراجعه کنید.

برای اطلاعات StableHLO به StableHLO - all_to_all مراجعه کنید.

همه به همه - مثال ۱.

XlaBuilder b("alltoall");

auto x = Parameter(&b, 0, ShapeUtil::MakeShape(F32, {4, 16}), "x");

AllToAll(

x,

/*split_dimension=*/ 1,

/*concat_dimension=*/ 0,

/*split_count=*/ 4);

در مثال بالا، 4 هسته در Alltoall شرکت دارند. در هر هسته، عملوند به 4 قسمت در امتداد بُعد 1 تقسیم میشود، بنابراین هر قسمت شکل f32[4,4] را دارد. 4 قسمت در تمام هستهها پراکنده میشوند. سپس هر هسته، قسمتهای دریافتی را در امتداد بُعد 0، به ترتیب از هسته 0 تا 4، به هم متصل میکند. بنابراین خروجی در هر هسته شکل f32[16,4] را دارد.

AllToAll - مثال 2 - StableHLO

در مثال بالا، 2 کپی در AllToAll شرکت دارند. در هر کپی، عملوند به شکل f32[2,4] است. عملوند در امتداد بُعد 1 به 2 قسمت تقسیم میشود، بنابراین هر قسمت شکل f32[2,2] را دارد. سپس این 2 قسمت بر اساس موقعیتشان در گروه کپی، بین کپیها جابجا میشوند. هر کپی، قسمت مربوط به خود را از هر دو عملوند جمعآوری کرده و آنها را در امتداد بُعد 0 به هم متصل میکند. در نتیجه، خروجی در هر کپی به شکل f32[4,2] است.

همه چیز به همه

همچنین به XlaBuilder::RaggedAllToAll مراجعه کنید.

RaggedAllToAll یک عملیات همه به همه را به صورت جمعی انجام میدهد، که در آن ورودی و خروجی، تانسورهای ناهموار هستند.

RaggedAllToAll(input, input_offsets, send_sizes, output, output_offsets, recv_sizes, replica_groups, channel_id)

| استدلالها | نوع | معناشناسی |

|---|---|---|

input | XlaOp | N آرایه از نوع T |

input_offsets | XlaOp | N آرایه از نوع T |

send_sizes | XlaOp | N آرایه از نوع T |

output | XlaOp | N آرایه از نوع T |

output_offsets | XlaOp | N آرایه از نوع T |

recv_sizes | XlaOp | N آرایه از نوع T |

replica_groups | بردار ReplicaGroup | هر گروه شامل لیستی از شناسههای کپی است. |

channel_id | ChannelHandle اختیاری | شناسه منحصر به فرد برای هر جفت ارسال/دریافت |

تانسورهای ناهموار توسط مجموعهای از سه تانسور تعریف میشوند:

-

data: تانسورdataدر امتداد بیرونیترین بُعد خود «ناهموار» شده است، که در امتداد آن هر عنصر اندیسگذاری شده اندازه متغیری دارد. -

offsets': تانسورoffsets، بیرونیترین بُعد تانسورdataرا اندیسگذاری میکند و نشاندهندهی آفست شروع هر عنصر ناهموار تانسورdataاست. -

sizes: تانسورsizesنشاندهنده اندازه هر عنصر ناهموار از تانسورdataاست، که در آن اندازه بر حسب واحدهای زیرعناصر مشخص میشود. یک زیرعنصر به عنوان پسوند شکل تانسور «داده» که با حذف بیرونیترین بُعد «ناهموار» به دست میآید، تعریف میشود. - تانسورهای

offsetsوsizesباید اندازه یکسانی داشته باشند.

یک نمونه از تانسور ناهموار:

data: [8,3] =

{ {a,b,c},{d,e,f},{g,h,i},{j,k,l},{m,n,o},{p,q,r},{s,t,u},{v,w,x} }

offsets: [3] = {0, 1, 4}

sizes: [3] = {1, 3, 4}

// Index 'data' at 'offsets'[0], 'sizes'[0]' // {a,b,c}

// Index 'data' at 'offsets'[1], 'sizes'[1]' // {d,e,f},{g,h,i},{j,k,l}

// Index 'data' at 'offsets'[2], 'sizes'[2]' // {m,n,o},{p,q,r},{s,t,u},{v,w,x}

output_offsets باید به گونهای تقسیمبندی شوند که هر کپی، در دیدگاه خروجی کپی هدف، دارای آفست باشد.

برای i امین آفست خروجی، کپی فعلی، بهروزرسانی input[input_offsets[i]:input_offsets[i]+send_sizes[i]] را به کپی i ام ارسال میکند که در output_i[output_offsets[i]:output_offsets[i]+send_sizes[i]] در output کپی i ام نوشته خواهد شد.

برای مثال، اگر دو کپی داشته باشیم:

replica 0:

input: [1, 2, 2]

output:[0, 0, 0, 0]

input_offsets: [0, 1]

send_sizes: [1, 2]

output_offsets: [0, 0]

recv_sizes: [1, 1]

replica 1:

input: [3, 4, 0]

output: [0, 0, 0, 0]

input_offsets: [0, 1]

send_sizes: [1, 1]

output_offsets: [1, 2]

recv_sizes: [2, 1]

// replica 0's result will be: [1, 3, 0, 0]

// replica 1's result will be: [2, 2, 4, 0]

HLO نامرتب و همهکاره، استدلالهای زیر را دارد:

-

input: تانسور داده ورودی ناهموار. -

output: تانسور داده خروجی ناهموار. -

input_offsets: تانسور آفستهای ورودی ناهموار. -

send_sizes: تانسور اندازههای ارسال نامنظم. -

output_offsets: آرایهای از آفستهای ناهموار در خروجی کپی هدف. -

recv_sizes: تانسور اندازههای recv ناهموار.

تانسورهای *_offsets و *_sizes باید همگی شکل یکسانی داشته باشند.

دو شکل برای تانسورهای *_offsets و *_sizes پشتیبانی میشوند:

-

[num_devices]که در آن ragged-all-to-all میتواند حداکثر یک بهروزرسانی را به هر دستگاه از راه دور در گروه replica ارسال کند. برای مثال:

for (remote_device_id : replica_group) {

SEND input[input_offsets[remote_device_id]],

output[output_offsets[remote_device_id]],

send_sizes[remote_device_id] }

[num_devices, num_updates]که در آن ragged-all-to-all میتواند بهروزرسانیهایnum_updatesرا برای هر دستگاه راه دور در گروه replica تا همان دستگاه راه دور (هر کدام با آفستهای مختلف) ارسال کند.

برای مثال:

for (remote_device_id : replica_group) {

for (update_idx : num_updates) {

SEND input[input_offsets[remote_device_id][update_idx]],

output[output_offsets[remote_device_id][update_idx]]],

send_sizes[remote_device_id][update_idx] } }

و

همچنین XlaBuilder::And را ببینید.

عمل AND را به صورت عنصر به عنصر روی دو تانسور lhs و rhs انجام میدهد.

And(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

| rhs | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

شکل آرگومانها باید یا مشابه باشند یا سازگار. برای آشنایی با مفهوم سازگار بودن شکلها، به مستندات پخش مراجعه کنید. نتیجه یک عملیات، شکلی دارد که حاصل پخش دو آرایه ورودی است. در این نوع، عملیات بین آرایههایی با رتبههای مختلف پشتیبانی نمیشود ، مگر اینکه یکی از عملوندها اسکالر باشد.

یک نوع جایگزین با پشتیبانی از پخش چندبعدی برای And وجود دارد:

And(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

| rhs | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

| بُعد_پخش | آرایه برش | هر بُعد از شکل عملوند با کدام بُعد در شکل هدف مطابقت دارد؟ |

این نوع عملیات باید برای عملیات حسابی بین آرایههایی با رتبههای مختلف (مانند جمع کردن یک ماتریس به یک بردار) استفاده شود.

عملوند اضافی broadcast_dimensions برشی از اعداد صحیح است که ابعاد مورد استفاده برای پخش عملوندها را مشخص میکند. معانی آن به تفصیل در صفحه پخش توضیح داده شده است.

برای اطلاعات StableHLO به StableHLO - و مراجعه کنید.

ناهمگامسازی

همچنین HloInstruction::CreateAsyncStart ، HloInstruction::CreateAsyncUpdate ، HloInstruction::CreateAsyncDone مراجعه کنید.

AsyncDone ، AsyncStart و AsyncUpdate دستورالعملهای داخلی HLO هستند که برای عملیات ناهمزمان استفاده میشوند و به عنوان مقادیر اولیه در HLO عمل میکنند. این عملیات ممکن است در فایلهای HLO ظاهر شوند، اما قرار نیست توسط کاربران نهایی به صورت دستی ساخته شوند.

آتان۲

همچنین به XlaBuilder::Atan2 مراجعه کنید.

عملیات atan2 را بر اساس عنصر روی lhs و rhs انجام میدهد.

Atan2(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

| rhs | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

شکل آرگومانها باید یا مشابه باشند یا سازگار. برای آشنایی با مفهوم سازگار بودن شکلها، به مستندات پخش مراجعه کنید. نتیجه یک عملیات، شکلی دارد که حاصل پخش دو آرایه ورودی است. در این نوع، عملیات بین آرایههایی با رتبههای مختلف پشتیبانی نمیشود ، مگر اینکه یکی از عملوندها اسکالر باشد.

یک نوع جایگزین با پشتیبانی از پخش چندبعدی برای Atan2 وجود دارد:

Atan2(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

| rhs | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

| بُعد_پخش | آرایه برش | هر بُعد از شکل عملوند با کدام بُعد در شکل هدف مطابقت دارد؟ |

این نوع عملیات باید برای عملیات حسابی بین آرایههایی با رتبههای مختلف (مانند جمع کردن یک ماتریس به یک بردار) استفاده شود.

عملوند اضافی broadcast_dimensions برشی از اعداد صحیح است که ابعاد مورد استفاده برای پخش عملوندها را مشخص میکند. معانی آن به تفصیل در صفحه پخش توضیح داده شده است.

برای اطلاعات StableHLO به StableHLO - atan2 مراجعه کنید.

BatchNormGrad

همچنین برای شرح مفصلی از الگوریتم، به XlaBuilder::BatchNormGrad و مقاله اصلی نرمالسازی دستهای مراجعه کنید.

گرادیانهای نرم دستهای را محاسبه میکند.

BatchNormGrad(operand, scale, batch_mean, batch_var, grad_output, epsilon, feature_index)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | ایکس لا اوپ | آرایه n بعدی که قرار است نرمالسازی شود (x) |

scale | ایکس لا اوپ | آرایه تک بعدی (\(\gamma\)) |

batch_mean | ایکس لا اوپ | آرایه تک بعدی (\(\mu\)) |

batch_var | ایکس لا اوپ | آرایه تک بعدی (\(\sigma^2\)) |

grad_output | ایکس لا اوپ | گرادیانهای ارسالی به BatchNormTraining (\(\nabla y\)) |

epsilon | float | مقدار اپسیلون (\(\epsilon\)) |

feature_index | int64 | اندیس گذاری برای بُعد ویژگی در operand |

برای هر ویژگی در بُعد ویژگی ( feature_index شاخص بُعد ویژگی در operand است)، این عملیات گرادیانها را نسبت به operand ، offset و scale در تمام ابعاد دیگر محاسبه میکند. feature_index باید یک شاخص معتبر برای بُعد ویژگی در operand باشد.

سه گرادیان با فرمولهای زیر تعریف میشوند (با فرض یک آرایه ۴ بعدی به عنوان operand و با شاخص بعد ویژگی l ، اندازه دسته m و اندازههای مکانی w و h ):

\[ \begin{split} c_l&= \frac{1}{mwh}\sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h \left( \nabla y_{ijkl} \frac{x_{ijkl} - \mu_l}{\sigma^2_l+\epsilon} \right) \\\\ d_l&= \frac{1}{mwh}\sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h \nabla y_{ijkl} \\\\ \nabla x_{ijkl} &= \frac{\gamma_{l} }{\sqrt{\sigma^2_{l}+\epsilon} } \left( \nabla y_{ijkl} - d_l - c_l (x_{ijkl} - \mu_{l}) \right) \\\\ \nabla \gamma_l &= \sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h \left( \nabla y_{ijkl} \frac{x_{ijkl} - \mu_l}{\sqrt{\sigma^2_{l}+\epsilon} } \right) \\\\\ \nabla \beta_l &= \sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h \nabla y_{ijkl} \end{split} \]

ورودیهای batch_mean و batch_var نشاندهنده مقادیر گشتاورها در ابعاد دستهای و مکانی هستند.

نوع خروجی یک تاپل از سه هندل است:

| خروجیها | نوع | معناشناسی |

|---|---|---|

grad_operand | ایکس لا اوپ | گرادیان نسبت به operand ورودی (\(\nabla x\)) |

grad_scale | ایکس لا اوپ | گرادیان نسبت به ورودی ** scale ** (\(\nabla\gamma\)) |

grad_offset | ایکس لا اوپ | گرادیان نسبت به offset ورودی (\(\nabla\beta\)) |

برای اطلاعات StableHLO به StableHLO - batch_norm_grad مراجعه کنید.

استنتاج BatchNorm

همچنین برای شرح مفصلی از الگوریتم، به XlaBuilder::BatchNormInference و مقاله اصلی نرمالسازی دستهای مراجعه کنید.

یک آرایه را در ابعاد دستهای و مکانی نرمالسازی میکند.

BatchNormInference(operand, scale, offset, mean, variance, epsilon, feature_index)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | ایکس لا اوپ | آرایه n بعدی که قرار است نرمالسازی شود |

scale | ایکس لا اوپ | آرایه تک بعدی |

offset | ایکس لا اوپ | آرایه تک بعدی |

mean | ایکس لا اوپ | آرایه تک بعدی |

variance | ایکس لا اوپ | آرایه تک بعدی |

epsilon | float | مقدار اپسیلون |

feature_index | int64 | اندیس گذاری برای بُعد ویژگی در operand |

برای هر ویژگی در بُعد ویژگی ( feature_index شاخصی برای بُعد ویژگی در operand است)، این عملیات میانگین و واریانس را در تمام ابعاد دیگر محاسبه میکند و از میانگین و واریانس برای نرمالسازی هر عنصر در operand استفاده میکند. feature_index باید یک شاخص معتبر برای بُعد ویژگی در operand باشد.

BatchNormInference معادل فراخوانی BatchNormTraining بدون محاسبه mean و variance برای هر دسته است. در این روش از mean و variance ورودی به عنوان مقادیر تخمینی استفاده میشود. هدف از این عملیات کاهش تأخیر در استنتاج است، از این رو BatchNormInference نام دارد.

خروجی یک آرایه n بعدی و نرمال شده با شکلی مشابه operand ورودی است.

برای اطلاعات StableHLO به StableHLO - batch_norm_inference مراجعه کنید.

آموزش BatchNorm

همچنین برای شرح مفصلی از الگوریتم، به XlaBuilder::BatchNormTraining و the original batch normalization paper مراجعه کنید.

یک آرایه را در ابعاد دستهای و مکانی نرمالسازی میکند.

BatchNormTraining(operand, scale, offset, epsilon, feature_index)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | آرایه n بعدی که قرار است نرمالسازی شود (x) |

scale | XlaOp | آرایه تک بعدی (\(\gamma\)) |

offset | XlaOp | آرایه تک بعدی (\(\beta\)) |

epsilon | float | مقدار اپسیلون (\(\epsilon\)) |

feature_index | int64 | اندیس گذاری برای بُعد ویژگی در operand |

برای هر ویژگی در بُعد ویژگی ( feature_index شاخصی برای بُعد ویژگی در operand است)، این عملیات میانگین و واریانس را در تمام ابعاد دیگر محاسبه میکند و از میانگین و واریانس برای نرمالسازی هر عنصر در operand استفاده میکند. feature_index باید یک شاخص معتبر برای بُعد ویژگی در operand باشد.

الگوریتم برای هر دسته در operand به شرح زیر است \(x\) که شامل m عنصر با w و h به عنوان اندازه ابعاد مکانی است (با فرض اینکه operand یک آرایه ۴ بعدی است):

میانگین دستهای را محاسبه میکند \(\mu_l\) برای هر ویژگی

lدر بُعد ویژگی:\(\mu_l=\frac{1}{mwh}\sum_{i=1}^m\sum_{j=1}^w\sum_{k=1}^h x_{ijkl}\)واریانس دستهای را محاسبه میکند \(\sigma^2_l\): $\sigma^2 l=\frac{1}{mwh}\sum {i=1}^m\sum {j=1}^w\sum {k=1}^h (x_{ijkl} - \mu_l)^2$

نرمالسازی، مقیاسبندی و تغییر مکان:\(y_{ijkl}=\frac{\gamma_l(x_{ijkl}-\mu_l)}{\sqrt[2]{\sigma^2_l+\epsilon} }+\beta_l\)

مقدار اپسیلون، که معمولاً عدد کوچکی است، برای جلوگیری از خطاهای تقسیم بر صفر اضافه میشود.

نوع خروجی یک چندتایی از سه XlaOp است:

| خروجیها | نوع | معناشناسی |

|---|---|---|

output | XlaOp | آرایه n بعدی با شکل مشابه operand ورودی (y) |

batch_mean | XlaOp | آرایه تک بعدی (\(\mu\)) |

batch_var | XlaOp | آرایه تک بعدی (\(\sigma^2\)) |

batch_mean و batch_var گشتاورهایی هستند که با استفاده از فرمولهای بالا در ابعاد دستهای و مکانی محاسبه میشوند.

برای اطلاعات StableHLO به StableHLO - batch_norm_training مراجعه کنید.

بیتکست

همچنین HloInstruction::CreateBitcast مراجعه کنید.

ممکن است Bitcast در فایلهای HLO ظاهر شود، اما قرار نیست توسط کاربران نهایی به صورت دستی ساخته شوند.

نوع تبدیل بیتکست

همچنین به XlaBuilder::BitcastConvertType مراجعه کنید.

مشابه tf.bitcast در TensorFlow، عملیات تبدیل بیت به بیت را بر اساس عنصر از یک شکل داده به شکل هدف انجام میدهد. اندازه ورودی و خروجی باید مطابقت داشته باشند: به عنوان مثال، عناصر s32 از طریق روال تبدیل بیت به عناصر f32 تبدیل میشوند و یک عنصر s32 به چهار عنصر s8 تبدیل میشود. تبدیل بیت به عنوان یک تبدیل سطح پایین پیادهسازی میشود، بنابراین ماشینهایی با نمایشهای مختلف ممیز شناور، نتایج متفاوتی خواهند داد.

BitcastConvertType(operand, new_element_type)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | آرایهای از نوع T با تیرههای D |

new_element_type | PrimitiveType | نوع U |

ابعاد عملوند و شکل هدف باید با هم مطابقت داشته باشند، به جز آخرین بُعد که به نسبت اندازه اولیه قبل و بعد از تبدیل تغییر خواهد کرد.

نوع عناصر منبع و مقصد نباید چندتایی باشند.

برای اطلاعات StableHLO به StableHLO - bitcast_convert مراجعه کنید.

تبدیل بیتکست به نوع اولیه با عرض متفاوت

دستورالعمل BitcastConvert HLO از حالتی پشتیبانی میکند که اندازه عنصر خروجی از نوع T' با اندازه عنصر ورودی T برابر نباشد. از آنجایی که کل عملیات از نظر مفهومی یک bitcast است و بایتهای زیرین را تغییر نمیدهد، شکل عنصر خروجی باید تغییر کند. برای B = sizeof(T), B' = sizeof(T') ، دو حالت ممکن وجود دارد.

اول، وقتی B > B' باشد، شکل خروجی یک بُعد فرعی جدید با اندازه B/B' میگیرد. برای مثال:

f16[10,2]{1,0} %output = f16[10,2]{1,0} bitcast-convert(f32[10]{0} %input)

این قانون برای اسکالرهای مؤثر یکسان است:

f16[2]{0} %output = f16[2]{0} bitcast-convert(f32[] %input)

از طرف دیگر، برای B' > B دستورالعمل نیاز دارد که آخرین بُعد منطقی شکل ورودی برابر با B'/B باشد و این بُعد در طول تبدیل حذف میشود:

f32[10]{0} %output = f32[10]{0} bitcast-convert(f16[10,2]{1,0} %input)

توجه داشته باشید که تبدیل بین پهنای بیتهای مختلف به صورت عنصری انجام نمیشود.

پخش

همچنین به XlaBuilder::Broadcast مراجعه کنید.

با کپی کردن دادهها در آرایه، ابعاد آن را افزایش میدهد.

Broadcast(operand, broadcast_sizes)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | آرایهای که قرار است کپی شود |

broadcast_sizes | ArraySlice<int64> | اندازههای ابعاد جدید |

ابعاد جدید در سمت چپ درج میشوند، یعنی اگر broadcast_sizes دارای مقادیر {a0, ..., aN} باشد و شکل عملوند دارای ابعاد {b0, ..., bM} باشد، آنگاه شکل خروجی دارای ابعاد {a0, ..., aN, b0, ..., bM} خواهد بود.

ابعاد جدید به کپیهایی از عملوند، یعنی

output[i0, ..., iN, j0, ..., jM] = operand[j0, ..., jM]

برای مثال، اگر operand یک اسکالر f32 با مقدار 2.0f باشد و broadcast_sizes برابر با {2, 3} باشد، نتیجه یک آرایه با شکل f32[2, 3] خواهد بود و تمام مقادیر موجود در نتیجه 2.0f خواهند بود.

برای اطلاعات StableHLO به StableHLO - broadcast مراجعه کنید.

پخشدردیم

همچنین به XlaBuilder::BroadcastInDim مراجعه کنید.

با کپی کردن دادهها در آرایه، اندازه و تعداد ابعاد آن را افزایش میدهد.

BroadcastInDim(operand, out_dim_size, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | آرایهای که قرار است کپی شود |

out_dim_size | ArraySlice<int64> | اندازه ابعاد شکل هدف |

broadcast_dimensions | ArraySlice<int64> | هر بُعد از شکل عملوند با کدام بُعد در شکل هدف مطابقت دارد؟ |

مشابه Broadcast است، اما امکان اضافه کردن ابعاد در هر جایی و گسترش ابعاد موجود با اندازه ۱ را فراهم میکند.

operand به شکلی که توسط out_dim_size توصیف شده است، پخش میشود. broadcast_dimensions ابعاد operand را به ابعاد شکل هدف نگاشت میکند، یعنی بعد iام عملوند به بعد broadcast_dimension[i]ام شکل خروجی نگاشت میشود. ابعاد operand باید اندازه ۱ داشته باشند یا به اندازه ابعادی در شکل خروجی که به آن نگاشت شدهاند، باشند. ابعاد باقیمانده با ابعادی با اندازه ۱ پر میشوند. سپس پخش با ابعاد منحط در امتداد این ابعاد منحط پخش میشود تا به شکل خروجی برسد. معانی به تفصیل در صفحه پخش شرح داده شده است.

تماس بگیرید

همچنین به XlaBuilder::Call مراجعه کنید.

با آرگومانهای داده شده، یک محاسبه را فراخوانی میکند.

Call(computation, operands...)

| استدلالها | نوع | معناشناسی |

|---|---|---|

computation | XlaComputation | محاسبه نوع T_0, T_1, ..., T_{N-1} -> S با N پارامتر از نوع دلخواه |

operands | توالی N XlaOp | N آرگومان از نوع دلخواه |

تعداد و نوع operands باید با پارامترهای computation مطابقت داشته باشد. مجاز است که هیچ operands نداشته باشد.

تماس ترکیبی

همچنین به XlaBuilder::CompositeCall مراجعه کنید.

عملیاتی را که از سایر عملیات StableHLO تشکیل شده است (مرکب شده) کپسوله میکند، ورودیها و composite_attributes را میگیرد و نتایج را تولید میکند. معنای op توسط ویژگی تجزیه پیادهسازی میشود. op ترکیبی را میتوان بدون تغییر معنای برنامه با تجزیه آن جایگزین کرد. در مواردی که inline کردن decomposition معنای op یکسانی را ارائه نمیدهد، استفاده از custom_call را ترجیح دهید.

فیلد نسخه (که مقدار پیشفرض آن 0 است) برای نشان دادن زمان تغییر معنای یک ترکیب استفاده میشود.

این عملیات به صورت یک kCall با ویژگی is_composite=true پیادهسازی شده است. فیلد decomposition توسط ویژگی computation مشخص میشود. ویژگیهای frontend، ویژگیهای باقیمانده را که با composite.

مثالی از عملیات CompositeCall:

f32[] call(f32[] %cst), to_apply=%computation, is_composite=true,

frontend_attributes = {

composite.name="foo.bar",

composite.attributes={n = 1 : i32, tensor = dense<1> : tensor<i32>},

composite.version="1"

}

CompositeCall(computation, operands..., name, attributes, version)

| استدلالها | نوع | معناشناسی |

|---|---|---|

computation | XlaComputation | محاسبه نوع T_0, T_1, ..., T_{N-1} -> S با N پارامتر از نوع دلخواه |

operands | توالی N XlaOp | تعداد متغیر مقادیر |

name | string | نام کامپوزیت |

attributes | string اختیاری | دیکشنری رشتهای اختیاری از ویژگیها |

version | اختیاری int64 | شماره به نسخه، بهروزرسانیها به معناشناسی عملیات مرکب |

decomposition یک عملیات، فیلدی به نام نیست، بلکه به صورت یک ویژگی to_apply ظاهر میشود که به تابعی اشاره میکند که شامل پیادهسازی سطح پایینتر است، یعنی to_apply=%funcname

اطلاعات بیشتر در مورد ترکیب و تجزیه را میتوانید در StableHLO Specification بیابید.

سیبیآرتی

همچنین به XlaBuilder::Cbrt مراجعه کنید.

عملیات ریشه سوم به صورت عنصر به عنصر x -> cbrt(x) .

Cbrt(operand)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | عملوند تابع |

Cbrt همچنین از آرگومان اختیاری result_accuracy پشتیبانی میکند:

Cbrt(operand, result_accuracy)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | عملوند تابع |

result_accuracy | ResultAccuracy اختیاری | انواع دقتی که کاربر میتواند برای عملیاتهای تکی با پیادهسازیهای متعدد درخواست کند |

برای اطلاعات بیشتر در مورد result_accuracy به بخش Result Accuracy مراجعه کنید.

برای اطلاعات StableHLO به StableHLO - cbrt مراجعه کنید.

سقف

همچنین به XlaBuilder::Ceil مراجعه کنید.

سقف عنصر-محور x -> ⌈x⌉ .

Ceil(operand)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | عملوند تابع |

برای اطلاعات StableHLO به StableHLO - ceil مراجعه کنید.

چولسکی

همچنین به XlaBuilder::Cholesky مراجعه کنید.

تجزیه چولسکی دستهای از ماتریسهای متقارن (هرمیتی) مثبت معین را محاسبه میکند.

Cholesky(a, lower)

| استدلالها | نوع | معناشناسی |

|---|---|---|

a | XlaOp | آرایهای از نوع مختلط یا ممیز شناور با ابعاد > ۲. |

lower | bool | اینکه آیا از مثلث بالایی یا پایینی a استفاده شود. |

اگر lower true باشد، ماتریسهای پایین-مثلثی l را طوری محاسبه میکند که $a = l. l^T$. اگر lower false باشد، ماتریسهای بالا-مثلثی u طوری محاسبه میکند که\(a = u^T . u\).

دادههای ورودی فقط از مثلث پایینی/بالایی a خوانده میشوند، که به مقدار lower بستگی دارد. مقادیر مثلث دیگر نادیده گرفته میشوند. دادههای خروجی در همان مثلث بازگردانده میشوند؛ مقادیر موجود در مثلث دیگر، از نظر پیادهسازی تعریف شدهاند و میتوانند هر چیزی باشند.

اگر a بیشتر از ۲ بُعد داشته باشد، a به عنوان یک دسته از ماتریسها در نظر گرفته میشود، که در آن همه به جز ۲ بُعد فرعی، ابعاد دستهای هستند.

اگر a متقارن (هرمیتی) مثبت معین نباشد، نتیجه تعریفشده توسط پیادهسازی است.

برای اطلاعات StableHLO به StableHLO-cholesky مراجعه کنید.

گیره

همچنین به XlaBuilder::Clamp مراجعه کنید.

یک عملوند را در محدوده بین حداقل و حداکثر مقدار نگه میدارد.

Clamp(min, operand, max)

| استدلالها | نوع | معناشناسی |

|---|---|---|

min | XlaOp | آرایه از نوع T |

operand | XlaOp | آرایه از نوع T |

max | XlaOp | آرایه از نوع T |

با توجه به یک عملوند و مقادیر حداقل و حداکثر، اگر عملوند در محدوده بین حداقل و حداکثر باشد، مقدار حداقل را برمیگرداند، در غیر این صورت اگر عملوند کمتر از این محدوده باشد یا مقدار حداکثر را برمیگرداند اگر عملوند بالاتر از این محدوده باشد. یعنی، clamp(a, x, b) = min(max(a, x), b) .

هر سه آرایه باید شکل یکسانی داشته باشند. از طرف دیگر، به عنوان یک شکل محدود از پخش ، min و/یا max میتوانند یک اسکالر از نوع T باشند.

مثال با min و max اسکالر:

let operand: s32[3] = {-1, 5, 9};

let min: s32 = 0;

let max: s32 = 6;

==>

Clamp(min, operand, max) = s32[3]{0, 5, 6};

برای اطلاعات StableHLO به گیره StableHLO مراجعه کنید.

جمع کردن

همچنین به XlaBuilder::Collapse و عملیات tf.reshape مراجعه کنید.

ابعاد یک آرایه را به یک بعد تبدیل میکند.

Collapse(operand, dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | آرایه از نوع T |

dimensions | بردار int64 | زیرمجموعهای متوالی و مرتب از ابعاد T. |

تابع Collapse زیرمجموعهی دادهشده از ابعاد عملوند را با یک بُعد واحد جایگزین میکند. آرگومانهای ورودی یک آرایهی دلخواه از نوع T و یک بردار ثابت زمان کامپایل از شاخصهای بُعد هستند. شاخصهای بُعد باید یک زیرمجموعهی متوالی و به ترتیب (اعداد با بُعد کم به زیاد) از ابعاد T باشند. بنابراین، {0، 1، 2}، {0، 1} یا {1، 2} همگی مجموعههای بُعد معتبری هستند، اما {1، 0} یا {0، 2} معتبر نیستند. آنها با یک بُعد جدید واحد، در همان موقعیت در دنباله بُعدهایی که جایگزین میشوند، جایگزین میشوند و اندازهی بُعد جدید برابر با حاصلضرب اندازههای بُعد اصلی است. کمترین عدد بُعد در dimensions ، کندترین بُعد متغیر (بزرگترین) در لانهی حلقه است که این ابعاد را جمع میکند و بالاترین عدد بُعد، سریعترین بُعد متغیر (کوچکترین) است. اگر به ترتیب کلیتر برای جمع کردن نیاز دارید، به عملگر tf.reshape مراجعه کنید.

برای مثال، فرض کنید v آرایهای با ۲۴ عنصر باشد:

let v = f32[4x2x3] { { {10, 11, 12}, {15, 16, 17} },

{ {20, 21, 22}, {25, 26, 27} },

{ {30, 31, 32}, {35, 36, 37} },

{ {40, 41, 42}, {45, 46, 47} } };

// Collapse to a single dimension, leaving one dimension.

let v012 = Collapse(v, {0,1,2});

then v012 == f32[24] {10, 11, 12, 15, 16, 17,

20, 21, 22, 25, 26, 27,

30, 31, 32, 35, 36, 37,

40, 41, 42, 45, 46, 47};

// Collapse the two lower dimensions, leaving two dimensions.

let v01 = Collapse(v, {0,1});

then v01 == f32[4x6] { {10, 11, 12, 15, 16, 17},

{20, 21, 22, 25, 26, 27},

{30, 31, 32, 35, 36, 37},

{40, 41, 42, 45, 46, 47} };

// Collapse the two higher dimensions, leaving two dimensions.

let v12 = Collapse(v, {1,2});

then v12 == f32[8x3] { {10, 11, 12},

{15, 16, 17},

{20, 21, 22},

{25, 26, 27},

{30, 31, 32},

{35, 36, 37},

{40, 41, 42},

{45, 46, 47} };

کلز

همچنین به XlaBuilder::Clz مراجعه کنید.

صفرهای پیشرو را به صورت عنصری بشمارید.

Clz(operand)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | عملوند تابع |

پخش جمعی

همچنین به XlaBuilder::CollectiveBroadcast مراجعه کنید.

دادهها را در سراسر کپیها پخش میکند. دادهها از اولین شناسه کپی در هر گروه به شناسههای دیگر در همان گروه ارسال میشوند. اگر شناسه کپی در هیچ گروه کپی نباشد، خروجی روی آن کپی، تانسوری متشکل از 0(ها) به shape است.

CollectiveBroadcast(operand, replica_groups, channel_id)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | عملوند تابع |

replica_groups | بردار ReplicaGroup | هر گروه شامل لیستی از شناسههای کپی است. |

channel_id | ChannelHandle اختیاری | شناسه منحصر به فرد برای هر جفت ارسال/دریافت |

برای اطلاعات StableHLO به StableHLO - collective_broadcast مراجعه کنید.

CollectivePermute

همچنین به XlaBuilder::CollectivePermute مراجعه کنید.

CollectivePermute یک عملیات جمعی است که دادهها را بین کپیها ارسال و دریافت میکند.

CollectivePermute(operand, source_target_pairs, channel_id)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | آرایه ورودی n بعدی |

source_target_pairs | <int64, int64> | فهرستی از جفتهای (source_replica_id, target_replica_id). برای هر جفت، عملوند از کپی منبع به کپی هدف ارسال میشود. |

channel_id | ChannelHandle اختیاری | شناسه کانال اختیاری برای ارتباط بین ماژولها |

توجه داشته باشید که محدودیتهای زیر در مورد source_target_pairs وجود دارد:

- هیچ دو جفتی نباید شناسه کپی هدف یکسانی داشته باشند و همچنین نباید شناسه کپی منبع یکسانی داشته باشند.

- اگر یک شناسه کپی، هدف هیچ جفتی نباشد، خروجی روی آن کپی، تنسوری متشکل از 0(ها) با همان شکل ورودی است.

رابط برنامهنویسی کاربردی (API) عملیات CollectivePermute به صورت داخلی به دو دستورالعمل HLO ( CollectivePermuteStart و CollectivePermuteDone ) تجزیه شده است.

همچنین HloInstruction::CreateCollectivePermuteStart مراجعه کنید.

CollectivePermuteStart و CollectivePermuteDone به عنوان مقادیر اولیه در HLO عمل میکنند. این عملیات ممکن است در فایلهای HLO ظاهر شوند، اما قرار نیست توسط کاربران نهایی به صورت دستی ساخته شوند.

برای اطلاعات StableHLO به StableHLO - collective_permute مراجعه کنید.

مقایسه

همچنین به XlaBuilder::Compare مراجعه کنید.

مقایسه عنصری lhs و rhs موارد زیر را انجام میدهد:

معادله

همچنین به XlaBuilder::Eq مراجعه کنید.

مقایسه تساوی lhs و rhs را بر اساس عنصر انجام میدهد.

\(lhs = rhs\)

Eq(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | ایکس لا اوپ | عملوند سمت چپ: آرایهای از نوع T |

| rhs | ایکس لا اوپ | Left-hand-side operand: array of type T |

The arguments' shapes have to be either similar or compatible. See the broadcasting documentation about what it means for shapes to be compatible. The result of an operation has a shape which is the result of broadcasting the two input arrays. In this variant, operations between arrays of different ranks are not supported, unless one of the operands is a scalar.

An alternative variant with different-dimensional broadcasting support exists for Eq:

Eq(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

This variant of the operation should be used for arithmetic operations between arrays of different ranks (such as adding a matrix to a vector).

The additional broadcast_dimensions operand is a slice of integers specifying the dimensions to use for broadcasting the operands. The semantics are described in detail on the broadcasting page .

Support a total order over the floating point numbers exists for Eq, by enforcing:

\[-NaN < -Inf < -Finite < -0 < +0 < +Finite < +Inf < +NaN.\]

EqTotalOrder(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

For StableHLO information see StableHLO - compare .

نه

See also XlaBuilder::Ne .

Performs element-wise not equal-to comparison of lhs and rhs .

\(lhs != rhs\)

Ne(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

The arguments' shapes have to be either similar or compatible. See the broadcasting documentation about what it means for shapes to be compatible. The result of an operation has a shape which is the result of broadcasting the two input arrays. In this variant, operations between arrays of different ranks are not supported, unless one of the operands is a scalar.

An alternative variant with different-dimensional broadcasting support exists for Ne:

Ne(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

This variant of the operation should be used for arithmetic operations between arrays of different ranks (such as adding a matrix to a vector).

The additional broadcast_dimensions operand is a slice of integers specifying the dimensions to use for broadcasting the operands. The semantics are described in detail on the broadcasting page .

Support a total order over the floating point numbers exists for Ne, by enforcing:

\[-NaN < -Inf < -Finite < -0 < +0 < +Finite < +Inf < +NaN.\]

NeTotalOrder(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

For StableHLO information see StableHLO - compare .

جی

See also XlaBuilder::Ge .

Performs element-wise greater-or-equal-than comparison of lhs and rhs .

\(lhs >= rhs\)

Ge(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

The arguments' shapes have to be either similar or compatible. See the broadcasting documentation about what it means for shapes to be compatible. The result of an operation has a shape which is the result of broadcasting the two input arrays. In this variant, operations between arrays of different ranks are not supported, unless one of the operands is a scalar.

An alternative variant with different-dimensional broadcasting support exists for Ge:

Ge(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

This variant of the operation should be used for arithmetic operations between arrays of different ranks (such as adding a matrix to a vector).

The additional broadcast_dimensions operand is a slice of integers specifying the dimensions to use for broadcasting the operands. The semantics are described in detail on the broadcasting page .

Support a total order over the floating point numbers exists for Gt, by enforcing:

\[-NaN < -Inf < -Finite < -0 < +0 < +Finite < +Inf < +NaN.\]

GtTotalOrder(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

For StableHLO information see StableHLO - compare .

جی تی

See also XlaBuilder::Gt .

Performs element-wise greater-than comparison of lhs and rhs .

\(lhs > rhs\)

Gt(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

The arguments' shapes have to be either similar or compatible. See the broadcasting documentation about what it means for shapes to be compatible. The result of an operation has a shape which is the result of broadcasting the two input arrays. In this variant, operations between arrays of different ranks are not supported, unless one of the operands is a scalar.

An alternative variant with different-dimensional broadcasting support exists for Gt:

Gt(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

This variant of the operation should be used for arithmetic operations between arrays of different ranks (such as adding a matrix to a vector).

The additional broadcast_dimensions operand is a slice of integers specifying the dimensions to use for broadcasting the operands. The semantics are described in detail on the broadcasting page .

For StableHLO information see StableHLO - compare .

لو

See also XlaBuilder::Le .

Performs element-wise less-or-equal-than comparison of lhs and rhs .

\(lhs <= rhs\)

Le(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

The arguments' shapes have to be either similar or compatible. See the broadcasting documentation about what it means for shapes to be compatible. The result of an operation has a shape which is the result of broadcasting the two input arrays. In this variant, operations between arrays of different ranks are not supported, unless one of the operands is a scalar.

An alternative variant with different-dimensional broadcasting support exists for Le:

Le(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

This variant of the operation should be used for arithmetic operations between arrays of different ranks (such as adding a matrix to a vector).

The additional broadcast_dimensions operand is a slice of integers specifying the dimensions to use for broadcasting the operands. The semantics are described in detail on the broadcasting page .

Support a total order over the floating point numbers exists for Le, by enforcing:

\[-NaN < -Inf < -Finite < -0 < +0 < +Finite < +Inf < +NaN.\]

LeTotalOrder(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

For StableHLO information see StableHLO - compare .

آن

See also XlaBuilder::Lt .

Performs element-wise less-than comparison of lhs and rhs .

\(lhs < rhs\)

Lt(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

The arguments' shapes have to be either similar or compatible. See the broadcasting documentation about what it means for shapes to be compatible. The result of an operation has a shape which is the result of broadcasting the two input arrays. In this variant, operations between arrays of different ranks are not supported, unless one of the operands is a scalar.

An alternative variant with different-dimensional broadcasting support exists for Lt:

Lt(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

This variant of the operation should be used for arithmetic operations between arrays of different ranks (such as adding a matrix to a vector).

The additional broadcast_dimensions operand is a slice of integers specifying the dimensions to use for broadcasting the operands. The semantics are described in detail on the broadcasting page .

Support a total order over the floating point numbers exists for Lt, by enforcing:

\[-NaN < -Inf < -Finite < -0 < +0 < +Finite < +Inf < +NaN.\]

LtTotalOrder(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

For StableHLO information see StableHLO - compare .

پیچیده

See also XlaBuilder::Complex .

Performs element-wise conversion to a complex value from a pair of real and imaginary values, lhs and rhs .

Complex(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

The arguments' shapes have to be either similar or compatible. See the broadcasting documentation about what it means for shapes to be compatible. The result of an operation has a shape which is the result of broadcasting the two input arrays. In this variant, operations between arrays of different ranks are not supported, unless one of the operands is a scalar.

An alternative variant with different-dimensional broadcasting support exists for Complex:

Complex(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

This variant of the operation should be used for arithmetic operations between arrays of different ranks (such as adding a matrix to a vector).

The additional broadcast_dimensions operand is a slice of integers specifying the dimensions to use for broadcasting the operands. The semantics are described in detail on the broadcasting page .

For StableHLO information see StableHLO - complex .

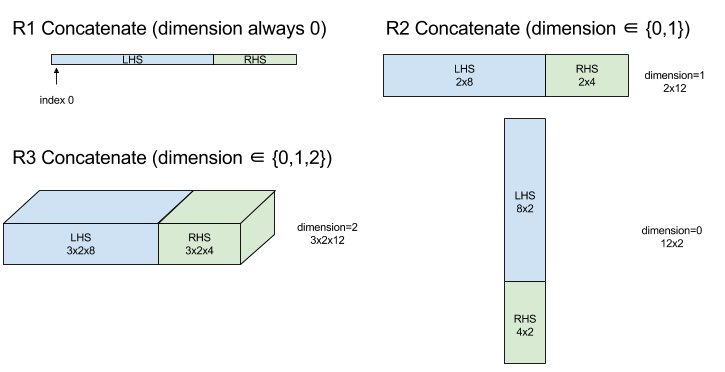

ConcatInDim (Concatenate)

See also XlaBuilder::ConcatInDim .

Concatenate composes an array from multiple array operands. The array has the same number of dimensions as each of the input array operands (which must have the same number of dimensions as each other) and contains the arguments in the order that they were specified.

Concatenate(operands..., dimension)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operands | sequence of N XlaOp | N arrays of type T with dimensions [L0, L1, ...]. Requires N >= 1. |

dimension | int64 | A value in the interval [0, N) that names the dimension to be concatenated between the operands . |

With the exception of dimension all dimensions must be the same. This is because XLA does not support "ragged" arrays. Also note that 0-dimensional values cannot be concatenated (as it's impossible to name the dimension along which the concatenation occurs).

1-dimensional example:

Concat({ {2, 3}, {4, 5}, {6, 7} }, 0)

//Output: {2, 3, 4, 5, 6, 7}

2-dimensional example:

let a = { {1, 2},

{3, 4},

{5, 6} };

let b = { {7, 8} };

Concat({a, b}, 0)

//Output: { {1, 2},

// {3, 4},

// {5, 6},

// {7, 8} }

Diagram:

For StableHLO information see StableHLO - concatenate .

Conditional

See also XlaBuilder::Conditional .

Conditional(predicate, true_operand, true_computation, false_operand, false_computation)

| استدلالها | نوع | معناشناسی |

|---|---|---|

predicate | XlaOp | Scalar of type PRED |

true_operand | XlaOp | Argument of type \(T_0\) |

true_computation | XlaComputation | XlaComputation of type \(T_0 \to S\) |

false_operand | XlaOp | Argument of type \(T_1\) |

false_computation | XlaComputation | XlaComputation of type \(T_1 \to S\) |

Executes true_computation if predicate is true , false_computation if predicate is false , and returns the result.

The true_computation must take in a single argument of type \(T_0\) and will be invoked with true_operand which must be of the same type. The false_computation must take in a single argument of type \(T_1\) and will be invoked with false_operand which must be of the same type. The type of the returned value of true_computation and false_computation must be the same.

Note that only one of true_computation and false_computation will be executed depending on the value of predicate .

Conditional(branch_index, branch_computations, branch_operands)

| استدلالها | نوع | معناشناسی |

|---|---|---|

branch_index | XlaOp | Scalar of type S32 |

branch_computations | sequence of N XlaComputation | XlaComputations of type \(T_0 \to S , T_1 \to S , ..., T_{N-1} \to S\) |

branch_operands | sequence of N XlaOp | Arguments of type \(T_0 , T_1 , ..., T_{N-1}\) |

Executes branch_computations[branch_index] , and returns the result. If branch_index is an S32 which is < 0 or >= N, then branch_computations[N-1] is executed as the default branch.

Each branch_computations[b] must take in a single argument of type \(T_b\) and will be invoked with branch_operands[b] which must be of the same type. The type of the returned value of each branch_computations[b] must be the same.

Note that only one of the branch_computations will be executed depending on the value of branch_index .

For StableHLO information see StableHLO - if .

ثابت

See also XlaBuilder::ConstantLiteral .

Produces an output from a constant literal .

Constant(literal)

| استدلالها | نوع | معناشناسی |

|---|---|---|

literal | LiteralSlice | constant view of an existing Literal |

For StableHLO information see StableHLO - constant .

ConvertElementType

See also XlaBuilder::ConvertElementType .

Similar to an element-wise static_cast in C++, ConvertElementType performs an element-wise conversion operation from a data shape to a target shape. The dimensions must match, and the conversion is an element-wise one; eg s32 elements become f32 elements via an s32 -to- f32 conversion routine.

ConvertElementType(operand, new_element_type)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | array of type T with dims D |

new_element_type | PrimitiveType | type U |

The dimensions of the operand and the target shape must match. The source and destination element types must not be tuples.

A conversion such as T=s32 to U=f32 will perform a normalizing int-to-float conversion routine such as round-to-nearest-even.

let a: s32[3] = {0, 1, 2};

let b: f32[3] = convert(a, f32);

then b == f32[3]{0.0, 1.0, 2.0}

For StableHLO information see StableHLO - convert .

Conv (Convolution)

See also XlaBuilder::Conv .

Computes a convolution of the kind used in neural networks. Here, a convolution can be thought of as a n-dimensional window moving across a n-dimensional base area and a computation is performed for each possible position of the window.

Conv Enqueues a convolution instruction onto the computation, which uses the default convolution dimension numbers with no dilation.

The padding is specified in a short-hand way as either SAME or VALID. SAME padding pads the input ( lhs ) with zeroes so that the output has the same shape as the input when not taking striding into account. VALID padding simply means no padding.

Conv(lhs, rhs, window_strides, padding, feature_group_count, batch_group_count, precision_config, preferred_element_type)

| استدلالها | نوع | معناشناسی |

|---|---|---|

lhs | XlaOp | (n+2)-dimensional array of inputs |

rhs | XlaOp | (n+2)-dimensional array of kernel weights |

window_strides | ArraySlice<int64> | nd array of kernel strides |

padding | Padding | enum of padding |

feature_group_count | int64 | the number of feature groups |

batch_group_count | int64 | the number of batch groups |

precision_config | optional PrecisionConfig | enum for level of precision |

preferred_element_type | optional PrimitiveType | enum of scalar element type |

Increasing levels of controls are available for Conv :

Let n be the number of spatial dimensions. The lhs argument is an (n+2)-dimensional array describing the base area. This is called the input, even though of course the rhs is also an input. In a neural network, these are the input activations. The n+2 dimensions are, in this order:

-

batch: Each coordinate in this dimension represents an independent input for which convolution is carried out. -

z/depth/features: Each (y,x) position in the base area has a vector associated to it, which goes into this dimension. -

spatial_dims: Describes thenspatial dimensions that define the base area that the window moves across.

The rhs argument is an (n+2)-dimensional array describing the convolutional filter/kernel/window. The dimensions are, in this order:

-

output-z: Thezdimension of the output. -

input-z: The size of this dimension timesfeature_group_countshould equal the size of thezdimension in lhs. -

spatial_dims: Describes thenspatial dimensions that define the nd window that moves across the base area.

The window_strides argument specifies the stride of the convolutional window in the spatial dimensions. For example, if the stride in the first spatial dimension is 3, then the window can only be placed at coordinates where the first spatial index is divisible by 3.

The padding argument specifies the amount of zero padding to be applied to the base area. The amount of padding can be negative -- the absolute value of negative padding indicates the number of elements to remove from the specified dimension before doing the convolution. padding[0] specifies the padding for dimension y and padding[1] specifies the padding for dimension x . Each pair has the low padding as the first element and the high padding as the second element. The low padding is applied in the direction of lower indices while the high padding is applied in the direction of higher indices. For example, if padding[1] is (2,3) then there will be a padding by 2 zeroes on the left and by 3 zeroes on the right in the second spatial dimension. Using padding is equivalent to inserting those same zero values into the input ( lhs ) before doing the convolution.

The lhs_dilation and rhs_dilation arguments specify the dilation factor to be applied to the lhs and rhs, respectively, in each spatial dimension. If the dilation factor in a spatial dimension is d, then d-1 holes are implicitly placed between each of the entries in that dimension, increasing the size of the array. The holes are filled with a no-op value, which for convolution means zeroes.

Dilation of the rhs is also called atrous convolution. For more details, see tf.nn.atrous_conv2d . Dilation of the lhs is also called transposed convolution. For more details, see tf.nn.conv2d_transpose .

The feature_group_count argument (default value 1) can be used for grouped convolutions. feature_group_count needs to be a divisor of both the input and the output feature dimension. If feature_group_count is greater than 1, it means that conceptually the input and output feature dimension and the rhs output feature dimension are split evenly into many feature_group_count groups, each group consisting of a consecutive subsequence of features. The input feature dimension of rhs needs to be equal to the lhs input feature dimension divided by feature_group_count (so it already has the size of a group of input features). The i-th groups are used together to compute feature_group_count for many separate convolutions. The results of these convolutions are concatenated together in the output feature dimension.

For depthwise convolution the feature_group_count argument would be set to the input feature dimension, and the filter would be reshaped from [filter_height, filter_width, in_channels, channel_multiplier] to [filter_height, filter_width, 1, in_channels * channel_multiplier] . For more details, see tf.nn.depthwise_conv2d .

The batch_group_count (default value 1) argument can be used for grouped filters during backpropagation. batch_group_count needs to be a divisor of the size of the lhs (input) batch dimension. If batch_group_count is greater than 1, it means that the output batch dimension should be of size input batch / batch_group_count . The batch_group_count must be a divisor of the output feature size.

The output shape has these dimensions, in this order:

-

batch: The size of this dimension timesbatch_group_countshould equal the size of thebatchdimension in lhs. -

z: Same size asoutput-zon the kernel (rhs). -

spatial_dims: One value for each valid placement of the convolutional window.

The figure above shows how the batch_group_count field works. Effectively, we slice each lhs batch into batch_group_count groups, and do the same for the output features. Then, for each of these groups we do pairwise convolutions and concatenate the output along the output feature dimension. The operational semantics of all the other dimensions (feature and spatial) remain the same.

The valid placements of the convolutional window are determined by the strides and the size of the base area after padding.

To describe what a convolution does, consider a 2d convolution, and pick some fixed batch , z , y , x coordinates in the output. Then (y,x) is a position of a corner of the window within the base area (eg the upper left corner, depending on how you interpret the spatial dimensions). We now have a 2d window, taken from the base area, where each 2d point is associated to a 1d vector, so we get a 3d box. From the convolutional kernel, since we fixed the output coordinate z , we also have a 3d box. The two boxes have the same dimensions, so we can take the sum of the element-wise products between the two boxes (similar to a dot product). That is the output value.

Note that if output-z is eg, 5, then each position of the window produces 5 values in the output into the z dimension of the output. These values differ in what part of the convolutional kernel is used - there is a separate 3d box of values used for each output-z coordinate. So you could think of it as 5 separate convolutions with a different filter for each of them.

Here is pseudo-code for a 2d convolution with padding and striding:

for (b, oz, oy, ox) { // output coordinates

value = 0;

for (iz, ky, kx) { // kernel coordinates and input z

iy = oy*stride_y + ky - pad_low_y;

ix = ox*stride_x + kx - pad_low_x;

if ((iy, ix) inside the base area considered without padding) {

value += input(b, iz, iy, ix) * kernel(oz, iz, ky, kx);

}

}

output(b, oz, oy, ox) = value;

}

precision_config is used to indicate the precision configuration. The level dictates whether hardware should attempt to generate more machine code instructions to provide more accurate dtype emulation when needed (ie emulating f32 on a TPU that only supports bf16 matmuls). Values may be DEFAULT , HIGH , HIGHEST . Additional details in the MXU sections .

preferred_element_type is a scalar element of higher/lower precision output types used for accumulation. preferred_element_type recommends the accumulation type for the given operation, however it is not guaranteed. This allows for some hardware backends to instead accumulate in a different type and convert to the preferred output type.

For StableHLO information see StableHLO - convolution .

ConvWithGeneralPadding

See also XlaBuilder::ConvWithGeneralPadding .

ConvWithGeneralPadding(lhs, rhs, window_strides, padding, feature_group_count, batch_group_count, precision_config, preferred_element_type)

Same as Conv where padding configuration is explicit.

| استدلالها | نوع | معناشناسی |

|---|---|---|

lhs | XlaOp | (n+2)-dimensional array of inputs |

rhs | XlaOp | (n+2)-dimensional array of kernel weights |

window_strides | ArraySlice<int64> | nd array of kernel strides |

padding | ArraySlice< pair<int64,int64>> | nd array of (low, high) padding |

feature_group_count | int64 | the number of feature groups |

batch_group_count | int64 | the number of batch groups |

precision_config | optional PrecisionConfig | enum for level of precision |

preferred_element_type | optional PrimitiveType | enum of scalar element type |

ConvWithGeneralDimensions

See also XlaBuilder::ConvWithGeneralDimensions .

ConvWithGeneralDimensions(lhs, rhs, window_strides, padding, dimension_numbers, feature_group_count, batch_group_count, precision_config, preferred_element_type)

Same as Conv where dimension numbers are explicit.

| استدلالها | نوع | معناشناسی |

|---|---|---|

lhs | XlaOp | (n+2)-dimensional array of inputs |

rhs | XlaOp | (n+2)-dimensional array of kernel weights |

window_strides | ArraySlice<int64> | nd array of kernel strides |

padding | Padding | enum of padding |

dimension_numbers | ConvolutionDimensionNumbers | the number of dimensions |

feature_group_count | int64 | the number of feature groups |

batch_group_count | int64 | the number of batch groups |

precision_config | optional PrecisionConfig | enum for level of precision |

preferred_element_type | optional PrimitiveType | enum of scalar element type |

ConvGeneral

See also XlaBuilder::ConvGeneral .

ConvGeneral(lhs, rhs, window_strides, padding, dimension_numbers, feature_group_count, batch_group_count, precision_config, preferred_element_type)

Same as Conv where dimension numbers and padding configuration is explicit

| استدلالها | نوع | معناشناسی |

|---|---|---|

lhs | XlaOp | (n+2)-dimensional array of inputs |

rhs | XlaOp | (n+2)-dimensional array of kernel weights |

window_strides | ArraySlice<int64> | nd array of kernel strides |

padding | ArraySlice< pair<int64,int64>> | nd array of (low, high) padding |

dimension_numbers | ConvolutionDimensionNumbers | the number of dimensions |

feature_group_count | int64 | the number of feature groups |

batch_group_count | int64 | the number of batch groups |

precision_config | optional PrecisionConfig | enum for level of precision |

preferred_element_type | optional PrimitiveType | enum of scalar element type |

ConvGeneralDilated

See also XlaBuilder::ConvGeneralDilated .

ConvGeneralDilated(lhs, rhs, window_strides, padding, lhs_dilation, rhs_dilation, dimension_numbers, feature_group_count, batch_group_count, precision_config, preferred_element_type, window_reversal)

Same as Conv where padding configuration, dilation factors, and dimension numbers are explicit.

| استدلالها | نوع | معناشناسی |

|---|---|---|

lhs | XlaOp | (n+2)-dimensional array of inputs |

rhs | XlaOp | (n+2)-dimensional array of kernel weights |

window_strides | ArraySlice<int64> | nd array of kernel strides |

padding | ArraySlice< pair<int64,int64>> | nd array of (low, high) padding |

lhs_dilation | ArraySlice<int64> | nd lhs dilation factor array |

rhs_dilation | ArraySlice<int64> | nd rhs dilation factor array |

dimension_numbers | ConvolutionDimensionNumbers | the number of dimensions |

feature_group_count | int64 | the number of feature groups |

batch_group_count | int64 | the number of batch groups |

precision_config | optional PrecisionConfig | enum for level of precision |

preferred_element_type | optional PrimitiveType | enum of scalar element type |

window_reversal | optional vector<bool> | flag used to logically reverse dimension before applying the convolution |

کپی

See also HloInstruction::CreateCopyStart .

Copy is internally decomposed into 2 HLO instructions CopyStart and CopyDone . Copy along with CopyStart and CopyDone serve as primitives in HLO. These ops may appear in HLO dumps, but they are not intended to be constructed manually by end users.

Cos

See also XlaBuilder::Cos .

Element-wise cosine x -> cos(x) .

Cos(operand)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | The operand to the function |

Cos also supports the optional result_accuracy argument:

Cos(operand, result_accuracy)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | The operand to the function |

result_accuracy | optional ResultAccuracy | The types of accuracy the user can request for unary ops with multiple implementations |

For more information on result_accuracy see Result Accuracy .

For StableHLO information see StableHLO - cosine .

Cosh

See also XlaBuilder::Cosh .

Element-wise hyperbolic cosine x -> cosh(x) .

Cosh(operand)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | The operand to the function |

Cosh also supports the optional result_accuracy argument:

Cosh(operand, result_accuracy)

| استدلالها | نوع | معناشناسی |

|---|---|---|

operand | XlaOp | The operand to the function |

result_accuracy | optional ResultAccuracy | The types of accuracy the user can request for unary ops with multiple implementations |

For more information on result_accuracy see Result Accuracy .

CustomCall

See also XlaBuilder::CustomCall .

Call a user-provided function within a computation.

CustomCall documentation is provided in Developer details - XLA Custom Calls

For StableHLO information see StableHLO - custom_call .

دیو

See also XlaBuilder::Div .

Performs element-wise division of dividend lhs and divisor rhs .

Div(lhs, rhs)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

Integer division overflow (signed/unsigned division/remainder by zero or signed division/remainder of INT_SMIN with -1 ) produces an implementation defined value.

The arguments' shapes have to be either similar or compatible. See the broadcasting documentation about what it means for shapes to be compatible. The result of an operation has a shape which is the result of broadcasting the two input arrays. In this variant, operations between arrays of different ranks are not supported, unless one of the operands is a scalar.

An alternative variant with different-dimensional broadcasting support exists for Div:

Div(lhs,rhs, broadcast_dimensions)

| استدلالها | نوع | معناشناسی |

|---|---|---|

| ل اچ اس | XlaOp | Left-hand-side operand: array of type T |

| rhs | XlaOp | Left-hand-side operand: array of type T |

| broadcast_dimension | ArraySlice | Which dimension in the target shape each dimension of the operand shape corresponds to |

This variant of the operation should be used for arithmetic operations between arrays of different ranks (such as adding a matrix to a vector).

The additional broadcast_dimensions operand is a slice of integers specifying the dimensions to use for broadcasting the operands. The semantics are described in detail on the broadcasting page .

For StableHLO information see StableHLO - divide .

دامنه

See also HloInstruction::CreateDomain .

Domain may appear in HLO dumps, but it is not intended to be constructed manually by end users.

نقطه

See also XlaBuilder::Dot .

Dot(lhs, rhs, precision_config, preferred_element_type)

| استدلالها | نوع | معناشناسی |

|---|---|---|

lhs | XlaOp | array of type T |

rhs | XlaOp | array of type T |

precision_config | optional PrecisionConfig | enum for level of precision |

preferred_element_type | optional PrimitiveType | enum of scalar element type |

The exact semantics of this operation depend on the ranks of the operands:

| ورودی | خروجی | معناشناسی |

|---|---|---|

vector [n] dot vector [n] | اسکالر | vector dot product |

matrix [mxk] dot vector [k] | vector [m] | matrix-vector multiplication |

matrix [mxk] dot matrix [kxn] | matrix [mxn] | matrix-matrix multiplication |

The operation performs sum of products over the second dimension of lhs (or the first if it has 1 dimension) and the first dimension of rhs . These are the "contracted" dimensions. The contracted dimensions of lhs and rhs must be of the same size. In practice, it can be used to perform dot products between vectors, vector/matrix multiplications or matrix/matrix multiplications.

precision_config is used to indicate the precision configuration. The level dictates whether hardware should attempt to generate more machine code instructions to provide more accurate dtype emulation when needed (ie emulating f32 on a TPU that only supports bf16 matmuls). Values may be DEFAULT , HIGH , HIGHEST . Additional details in the MXU sections .

preferred_element_type is a scalar element of higher/lower precision output types used for accumulation. preferred_element_type recommends the accumulation type for the given operation, however it is not guaranteed. This allows for some hardware backends to instead accumulate in a different type and convert to the preferred output type.

For StableHLO information see StableHLO - dot .

DotGeneral

See also XlaBuilder::DotGeneral .

DotGeneral(lhs, rhs, dimension_numbers, precision_config, preferred_element_type)

| استدلالها | نوع | معناشناسی |

|---|---|---|

lhs | XlaOp | array of type T |

rhs | XlaOp | array of type T |

dimension_numbers | DotDimensionNumbers | contracting and batch dimension numbers |

precision_config | optional PrecisionConfig | enum for level of precision |

preferred_element_type | optional PrimitiveType | enum of scalar element type |

Similar to Dot, but allows contracting and batch dimension numbers to be specified for both the lhs and rhs .

| DotDimensionNumbers Fields | نوع | معناشناسی |

|---|---|---|

lhs_contracting_dimensions | repeated int64 | lhs contracting dimension numbers |

rhs_contracting_dimensions | repeated int64 | rhs contracting dimension numbers |

lhs_batch_dimensions | repeated int64 | lhs batch dimension numbers |

rhs_batch_dimensions | repeated int64 | rhs batch dimension numbers |

DotGeneral performs the sum of products over contracting dimensions specified in dimension_numbers .

Associated contracting dimension numbers from the lhs and rhs do not need to be the same but must have the same dimension sizes.

Example with contracting dimension numbers:

lhs = { {1.0, 2.0, 3.0},

{4.0, 5.0, 6.0} }

rhs = { {1.0, 1.0, 1.0},

{2.0, 2.0, 2.0} }

DotDimensionNumbers dnums;

dnums.add_lhs_contracting_dimensions(1);

dnums.add_rhs_contracting_dimensions(1);

DotGeneral(lhs, rhs, dnums) -> { { 6.0, 12.0},

{15.0, 30.0} }

Associated batch dimension numbers from the lhs and rhs must have the same dimension sizes.

Example with batch dimension numbers (batch size 2, 2x2 matrices):

lhs = { { {1.0, 2.0},

{3.0, 4.0} },

{ {5.0, 6.0},

{7.0, 8.0} } }

rhs = { { {1.0, 0.0},

{0.0, 1.0} },

{ {1.0, 0.0},

{0.0, 1.0} } }

DotDimensionNumbers dnums;

dnums.add_lhs_contracting_dimensions(2);

dnums.add_rhs_contracting_dimensions(1);

dnums.add_lhs_batch_dimensions(0);

dnums.add_rhs_batch_dimensions(0);

DotGeneral(lhs, rhs, dnums) -> {

{ {1.0, 2.0},

{3.0, 4.0} },

{ {5.0, 6.0},

{7.0, 8.0} } }

| ورودی | خروجی | معناشناسی |

|---|---|---|

[b0, m, k] dot [b0, k, n] | [b0, m, n] | batch matmul |

[b0, b1, m, k] dot [b0, b1, k, n] | [b0, b1, m, n] | batch matmul |

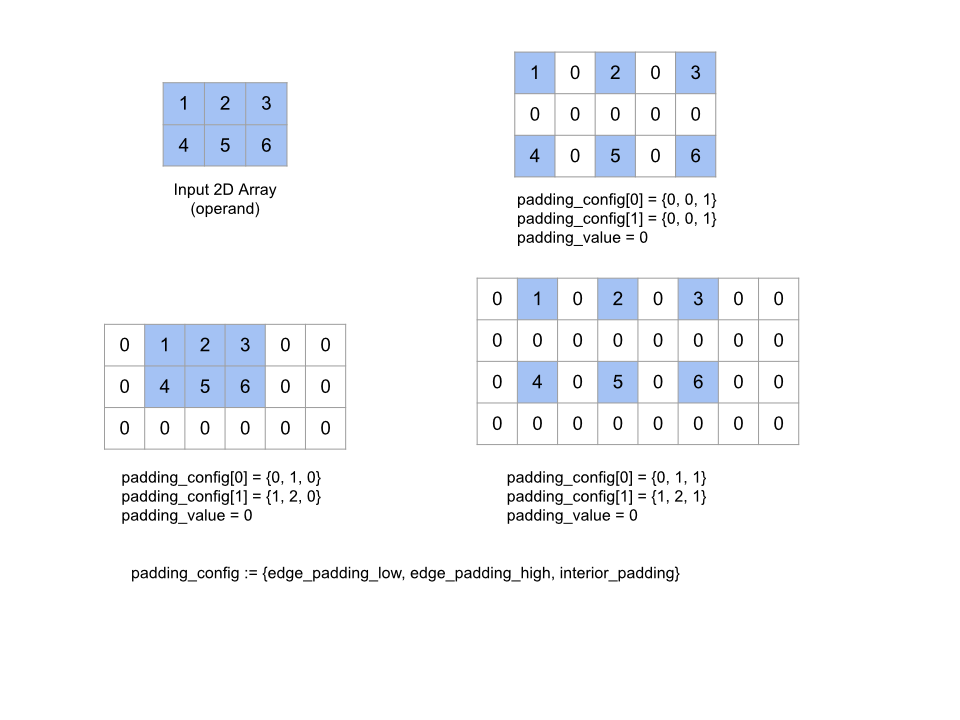

It follows that the resulting dimension number starts with the batch dimension, then the lhs non-contracting/non-batch dimension, and finally the rhs non-contracting/non-batch dimension.